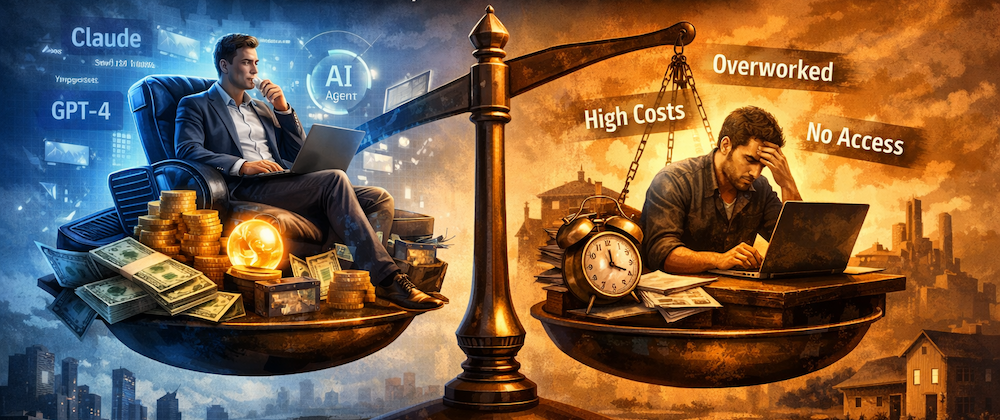

The argument that AI Agents are the future is flawed

🤔 AI agents and tools have improved so much that I’m finding myself in a weird spot: I’m always working. Even when I’m not working, I’m planning and brainstorming in Claude Projects so I can use the spec and plan files later in Claude Code. In a recent interview with The Pragmatic Engineer, Mitchell Hashimoto, co-founder of HashiCorp, shared his “new rule for building software: always have an agent running in the background doing something.” (link) ...