Do trust and accountability go hand in hand?

This is something I’ve been chewing on over the weekend. I don’t really have an answer, but here’s where I’m coming from.

One of my side projects at PiForge at the moment is building a system around Agentic Engineering. It uses team lead principles I’ve honed over the years and applies the way human teams communicate and organize across the SDLC to agents (i.e. start with a spec, write tickets on a kanban board, grab the next highest-priority ticket, work until done, test, PR, release, document).
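To make that loop concrete, here is a minimal Python sketch. It is only an illustration under my own assumptions, not the actual PiForge system: the `Ticket` class, the `run_board` function, and the "lower number means higher priority" convention are all hypothetical, and the step where an agent would do real work is just a placeholder.

```python
import heapq
from dataclasses import dataclass, field

# Stage names follow the workflow described above; everything else is hypothetical.
STAGES = ["work", "test", "pr", "release", "document"]


@dataclass(order=True)
class Ticket:
    priority: int                       # assumption: lower number = higher priority
    title: str = field(compare=False)
    history: list = field(default_factory=list, compare=False)


def run_board(tickets):
    """Pull tickets in priority order and walk each one through every stage."""
    board = list(tickets)
    heapq.heapify(board)
    done = []
    while board:
        ticket = heapq.heappop(board)     # grab the next highest-priority ticket
        for stage in STAGES:
            ticket.history.append(stage)  # placeholder for handing this stage to an agent
        done.append(ticket)
    return done


tickets = [Ticket(2, "Add login page"), Ticket(1, "Fix build"), Ticket(3, "Write README")]
finished = run_board(tickets)
print([t.title for t in finished])
```

The `history` list is the only record of what happened to each ticket here; a real setup would presumably log far more than stage names.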

The problem is, even with all the safeguards and process in place, I’m still double and triple checking everything. Partly because this framework is a work in progress and I don’t know how well it’s working yet, but something else is nagging at me…

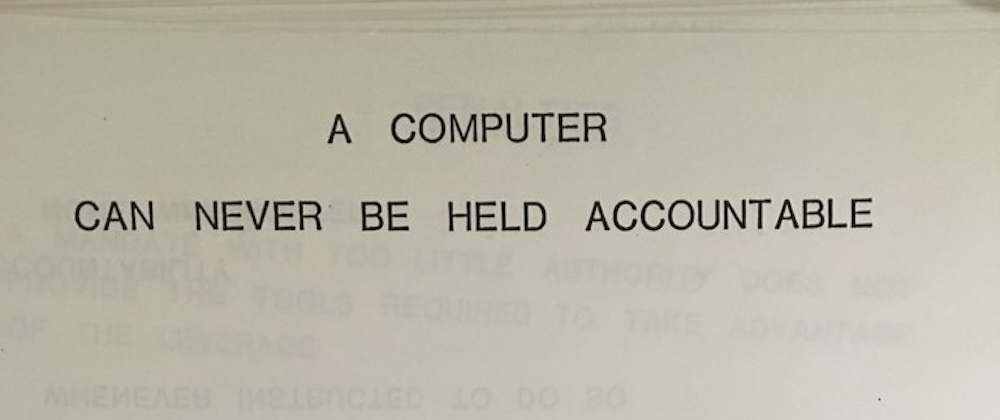

A 1979 IBM training manual famously declared:

“A computer can never be held accountable, therefore a computer must never make a management decision.”

This sentiment emphasizes that accountability lies with humans, not machines, as computers lack moral agency. And this is what’s bothering me.

Even if my tool and process are flawless, I can’t hold an AI agent accountable for the work in the same way that I can a human. And for me, that means I don’t trust it.

When I’m working with a single agent, I’m in the loop. The buck stops with me. But for an army of agents, I don’t quite know where that line is yet… Is trust without accountability even possible? Or am I trying to solve a fundamentally human problem with a technical solution?

When you delegate to a junior dev, you trust them because:

- They can learn from mistakes

- They can be held responsible

- They have skin in the game

- There are consequences for repeated failures

With AI agents? None of that applies. They don’t learn from your corrections (at least not in a persistent way, which I’m also trying to tackle alongside other issues). They can’t be “held responsible.” They have no skin in the game. There are no consequences.

So maybe the real question isn’t “how do I make my agent system trustworthy” but rather “how do I structure accountability when the actor can’t be held accountable?”

I don’t have the answer yet. But I’m curious if others building with agent teams are wrestling with this same tension.

How are you thinking about trust and accountability in your agent workflows? Where do you draw the line between automation and oversight?

#AI #AIAgents #Leadership #TechEthics #SoftwareEngineering #AgenticAI #TrustAndAccountability #AIinBusiness #TechLeadership #Automation #PiForge

This post was originally published on LinkedIn